Azure Lighthouse: why is it so important?

Working for a Managed Service Provider (MSP), I have many times faced the challenge of managing multiple separate customers from a single pane of glass, whether the environment is a multi-tenant Active Directory, a single AD or a vanilla Azure tenant. An MSP is only as good as the tools it can build to manage all customers in a streamlined fashion.

In the Microsoft sphere, partners and large enterprises have faced many of the same challenges. If you are a large enterprise, you might be eligible for an Enterprise Agreement.

As a partner, you can apply to become a (tier 1) Cloud Solution Provider (CSP). The tools provided are far from good enough, because you are still bound by tenant isolation. If you want a view of all Azure Monitor alerts across all your customers, you need to create a tool that authenticates against each individual tenant and retrieves this information, similar to what I did with SCOM.
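To make the pain concrete, here is a minimal sketch of what such a tool has to do: one sign-in per tenant, then one alerts request per subscription. The customer list, the `get_token` and `http_get` helpers and the API version are assumptions for illustration. In a real tool the helpers would wrap your authentication library and HTTP client of choice.

```python
from urllib.parse import urlencode

# Hypothetical customer list: tenant ID -> subscription IDs in that tenant.
CUSTOMERS = {
    "contoso-tenant-id": ["sub-1", "sub-2"],
    "fabrikam-tenant-id": ["sub-3"],
}

ARM = "https://management.azure.com"
ALERTS_API_VERSION = "2019-03-01"  # assumption: verify against the REST reference


def token_endpoint(tenant_id):
    """Every tenant has its own Azure AD token endpoint."""
    return f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"


def alerts_url(subscription_id):
    """Build the alerts listing URL for one subscription."""
    query = urlencode({"api-version": ALERTS_API_VERSION})
    return (f"{ARM}/subscriptions/{subscription_id}"
            f"/providers/Microsoft.AlertsManagement/alerts?{query}")


def collect_alerts(customers, get_token, http_get):
    """Sign in to every tenant in turn and gather its alerts.

    get_token(endpoint) and http_get(url, token) are injected placeholders
    (hypothetical helpers), so the tenant-by-tenant loop stays visible.
    """
    alerts = []
    for tenant_id, subscriptions in customers.items():
        token = get_token(token_endpoint(tenant_id))  # one sign-in per tenant
        for subscription_id in subscriptions:
            for alert in http_get(alerts_url(subscription_id), token):
                alerts.append({
                    "tenant": tenant_id,
                    "subscription": subscription_id,
                    "alert": alert,
                })
    return alerts
```

Every new customer means another credential to store and another sign-in to maintain, which is exactly the overhead that makes tenant isolation so painful at scale.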

Project Towboat

Last year I attended a side meeting for MSPs at Ignite, where we discussed at-scale management in the Azure Portal. We were promised that something called Project Towboat was planned. Since then it has been dead silent.

Then, out of the blue, Microsoft announced Azure Lighthouse, promising simplified cross-tenant resource management. So what makes this so great?

Delegated resource access

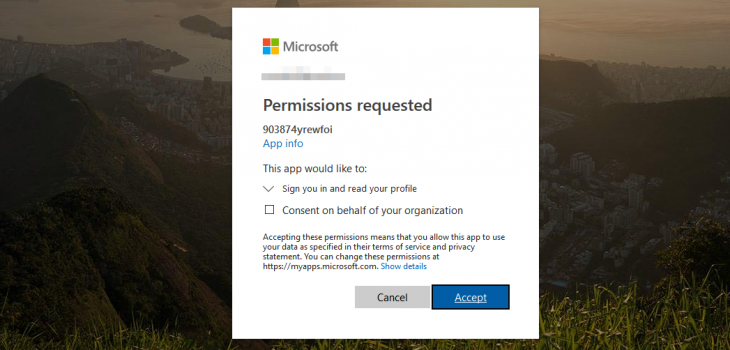

Azure Lighthouse uses delegated resource access (Microsoft calls it Azure delegated resource management). In essence, the customer establishes a trust with your (management/master) tenant. This allows users in the management directory (tenant) to manage resources on behalf of their customers. Many use Azure AD B2B to manage resources across multiple tenants. With Azure Lighthouse, you can do that without changing the context of the user.
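As a rough sketch of what that trust looks like, the customer side ends up with a registration definition that names the managing tenant and lists which of its users or groups get which role. The helper below only builds the core properties as a Python dictionary. It is not a full deployment template, and the offer name, tenant ID and principal ID are placeholders I made up for illustration.

```python
import json

# Built-in Azure role definition IDs (identical in every tenant).
READER = "acdd72a7-3385-48ef-bd42-f606fba81ae7"
CONTRIBUTOR = "b24988ac-6180-42a0-ab88-20f7382dd24c"


def registration_definition(offer_name, managing_tenant_id, authorizations):
    """Build the core properties of a delegation from customer to MSP.

    authorizations is a list of (principal_id, role_definition_id) pairs,
    where each principal lives in the managing (MSP) tenant.
    """
    if not authorizations:
        raise ValueError("at least one authorization is required")
    return {
        "registrationDefinitionName": offer_name,
        "managedByTenantId": managing_tenant_id,
        "authorizations": [
            {"principalId": principal_id, "roleDefinitionId": role_id}
            for principal_id, role_id in authorizations
        ],
    }


# Placeholder values only: substitute your own tenant and group IDs.
definition = registration_definition(
    "Example MSP managed services",
    "00000000-0000-0000-0000-000000000000",
    [("11111111-1111-1111-1111-111111111111", CONTRIBUTOR)],
)
print(json.dumps(definition, indent=2))
```

Granting roles to groups rather than individual users keeps the delegation stable when people join or leave the operations team.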

In my opinion, these are some of the features that make Azure Lighthouse so important to MSPs and others managing multiple tenants.

Cross-tenant monitoring in Azure

Azure Monitor is now multi-tenant. As long as the resource group or subscription is available to the person using Azure Monitor, application and infrastructure monitoring is available from a single pane of glass.

Multi-tenant Log Analytics queries

Log Analytics is part of Azure Monitor, where it is called Azure Monitor Logs. The engine behind it is still Log Analytics.

Log Analytics is already capable of searching across multiple workspaces. Since Azure Lighthouse will surface your customers' workspaces, you can run cross-tenant queries. How cool is that?
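A cross-workspace query is an ordinary query that unions the same table from several workspaces through the `workspace()` expression. The small helper below is a sketch that just assembles such a query string. The workspace names and the `Heartbeat` example are assumptions for illustration, and with many customers you would probably pass workspace IDs instead of names to avoid ambiguity.

```python
def cross_workspace_query(workspaces, table, pipeline):
    """Union one table across several workspaces and apply a query pipeline.

    workspaces: workspace names or IDs (hypothetical values in the example).
    table:      the table to read in every workspace, for example Heartbeat.
    pipeline:   the rest of the query, without the leading pipe character.
    """
    if not workspaces:
        raise ValueError("at least one workspace is required")
    for workspace in workspaces:
        if '"' in workspace:
            raise ValueError(f"invalid workspace identifier: {workspace!r}")
    sources = ", ".join(f'workspace("{w}").{table}' for w in workspaces)
    return f"union {sources}\n| {pipeline}"


query = cross_workspace_query(
    ["contoso-logs", "fabrikam-logs"],
    "Heartbeat",
    "summarize LastSeen = max(TimeGenerated) by Computer",
)
print(query)
```

The resulting text can be pasted into the Logs query editor, or sent through whichever query client you already use.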

Azure Security Center for all customers

The beauty of delegated resource management does not stop there. Apart from Log Analytics, another great thing for your security team is that Azure Security Center is available through Azure Lighthouse. This means that the team (or that one person) can look at a single dashboard, or write an integration against a single tenant.

Summary

Azure Lighthouse greatly simplifies at-scale and cross-tenant management. It is tightly integrated with Azure Resource Manager for deployment, and with Azure Monitor and Security Center for monitoring infrastructure and security.

I am really looking forward to creating solutions and working more with Azure Lighthouse. It is a long-awaited product, and with this launch, Microsoft is way ahead of its competitors.

Expect more dedicated posts on how to manage and automate using Azure Lighthouse in the future.

You can read more and find examples in the official Azure Lighthouse documentation and the Azure Lighthouse GitHub examples.